An elephant in the augmented room!?

Honestly, the headline is not true. Everybody would love to talk about the elephant, but we just know too little about it… What? Exactly. I’m talking about Magic Leap, the AR start-up from Florida that just received a huge investment check from Google and others to develop… something. They claim to be onto something really, really big that will completely change the usage and quality of everyday AR. But what do they really offer?

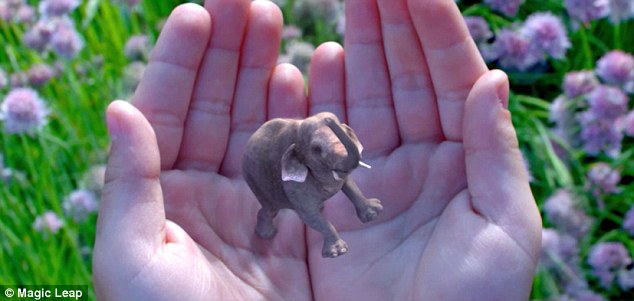

Let’s collect the puzzle pieces… Engadget, the NY Times, et al. are all reporting on the mysterious start-up, which shows some really nice stills and a short video (of the elephant starting to float up from the user’s hand). These give the impression that they have really made a breakthrough in inserting augmented CG imagery seamlessly into the real world. The AR objects have correct (or at least convincing) lighting and shadows and match the color tone of the scene, the user’s hand partially occludes the object, and the lens looks realistic with its depth of field, etc. At a glance, a perfectly integrated real-time augmentation… and everybody panics in delight.

But so far we have only seen well-made marketing material, and we know too little about the actual technology to judge it. Magic Leap calls their technology a “dynamic digitized lightfield signal”, which is supposed to produce realistic renderings that blend in perfectly. They teamed up with movie companies like Legendary Pictures and Weta Workshop, and those guys know a thing or two about blending in CGI. Other partners like Microelectronics Research give us more to speculate about…

Magic Leap leaves many open questions and lists just as many open positions, ranging from opto-mechanical engineers (for the development of glasses? lenses?) and embedded system developers (tiny device designs?) to tracking/computer vision specialists (are they creating a new approach for huge outdoor markerless 3D tracking?), Android developers (will it all run on Android?), Unity developers (will all rendering happen in Unity? Is Unity powerful enough?), and audio & game developers… It seems we could get a first demonstrator in the form of a “first person shooter on a world-changing new platform in an effort to defend Earth from robotic overthrow”! Neat!

A good summary of the media attention and quotes comes from NewsyTech:

All the bits and pieces for realistic rendering (guesstimating color & light info from a camera video feed or additional spherical HDR cameras, etc.) I can believe, as well as improved tracking and occlusion handling. Here I can trust these guys with their big funding… but the more interesting and unknown part is the output device… Will it be glasses? An HMD? Lenses? It must be lightweight, work in stereo, have a huge field of view and finally address the focus/convergence problems we have today: the user must be able to focus and converge on the real world and get a correct CGI focus with a matching sense of distance along the way. We don’t want the feeling of a “virtual screen at 10 feet distance” or similar, as many optical see-through HMDs give us today. We would rather have an immersive blend for AR that covers the full field of view, in stereo, with eye tracking and a perfect CG overlay. Maybe their technology will get us there (compare NVIDIA’s publications on it). Cinematic AR with full field of view and free focus: this would really be a breakthrough!
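To put rough numbers on the focus/convergence problem, here is a minimal sketch using only basic geometry. It assumes an interpupillary distance of 63 mm (a typical adult value, my assumption) and a display whose optics focus everything at a fixed 10 feet; the function names are made up for illustration and say nothing about how Magic Leap or any real HMD works.

```python
import math

# Assumption: typical adult interpupillary distance, in meters.
ASSUMED_IPD_M = 0.063
# Assumption: a see-through display with one fixed focal plane at 10 feet.
FIXED_FOCAL_PLANE_M = 3.048


def vergence_angle_deg(distance_m, ipd_m=ASSUMED_IPD_M):
    """Angle between both eyes' lines of sight when fixating a point at distance_m."""
    return math.degrees(2.0 * math.atan((ipd_m / 2.0) / distance_m))


def focus_demand_diopters(distance_m):
    """Optical power the eye needs to focus at distance_m (diopters = 1 / meters)."""
    return 1.0 / distance_m


def focus_mismatch_diopters(object_distance_m, display_focal_distance_m):
    """Gap between where stereo says the object is and where the optics focus it."""
    return abs(
        focus_demand_diopters(object_distance_m)
        - focus_demand_diopters(display_focal_distance_m)
    )


# A virtual object held in the hand, at arm's length, at the focal plane, far away.
for distance_m in (0.4, 1.0, FIXED_FOCAL_PLANE_M, 10.0):
    print(
        f"object at {distance_m:6.2f} m: "
        f"vergence {vergence_angle_deg(distance_m):5.2f} deg, "
        f"focus mismatch {focus_mismatch_diopters(distance_m, FIXED_FOCAL_PLANE_M):4.2f} D"
    )
```

The mismatch is zero only when the virtual object sits exactly on the fixed focal plane and grows quickly for anything close to the user, like an elephant in your hand. That is exactly the case where a "free focus" display would matter most.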

But today we cannot judge a single piece of it. We only know that the big investors seem to trust those guys (and the investors must have way more insight than we do today), that the list of hires is pretty damn long, and that it appears they know what they are doing. I’ve tried to get in touch with the Magic Leap guys (if you read this, please drop me a mail! :-)), so let’s see when more info and new video demos become available. The current demos are fun, but floating-object demos are typically used to avoid revealing tracking errors. Also, we can’t tell anything about real-time performance from a video. And last, no output device has been shown yet. Darn!

But I can’t wait for more, and I’m crossing my fingers that the world is onto something huge here!