The next 10 years – all through augmented windows?

How will real augmented reality hit the mass market? Which system will take the lead? … and I am not talking about Microsoft Windows here, I used a lowercase “w”. It's about the devices rather than the brand or operating system today. Will we all wear tiny AR glasses soon, or will we stay with a hand-held or fixed-position augmented window metaphor for a while longer, let's say, 10 more years?

The Augmented Window

The first AR experiences I compiled myself were the magic book and the snowman ARToolKit demo on a Hiro marker. I had to use a webcam on a long cable to walk around the marker and then twist my head to watch the result on a nearby screen. Movement was limited and the usefulness of this setup was obviously zero. But it was good fun and laid the foundation for my whole career. I was excited and wanted my AR glasses on the spot!

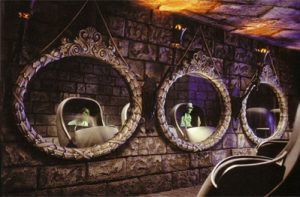

But then reality hit me and made me (and all of us) realize that it's still a bit sci-fi as of today (back then). Other concepts filled the gap. The augmented mirror was seen a lot: people stand in front of a big screen that pretends to be a mirror by showing the flipped camera image. Many successful demos hit the stores to let people try on sunglasses or virtual clothing or check the contents of a LEGO box, besides all the demos done purely for marketing effect.

The mirror carried the wow effect nicely, but only let us observe reality through pixel copies. Video see-through is kind of annoying. Unlike in Futurama, television will never have better resolution than the real world. Optical concepts showed up more often, and “holographic” mirror demos could be seen at every trade fair, just placing a screen face-down on a pyramid of glass and mirrors or bringing Tupac back on stage. Optical overlays that don't need digital devices worn by the user can be very helpful and are obviously easy to use. I already experienced this as a child at Disney World, which used the very same mirror trick to place ghosts in my cart or have them dance in the hall.

Fun, but probably not useful for real life “productive environments”? The company Realfiction just presented their “DeepFrame” lens concept in this video. The results can be seen a bit better in the linked video below:

You can look through a window frame and see augmented content placed in the landscape or in your room. Perspective changes seem to work nicely. The frame itself seems to have a fixed position: no tracking or movement of the frame itself is shown. But it seems that multiple users can stand in front of the frame and each get the matching perspective, with no tracking needed and no other limitations. Too good to be true? How about focus and convergence issues and stereo depth cues? Well, that's not the point today. The idea is clear: AR window frames could add extended data access to our lives and also create new fun experiences – without the need to block our vision or pretty faces with dorky glasses.

Glassholes 2.0?

Speaking of glasses: Google Glass (not being AR, but pretending to be back then) failed, not only because of the missing use cases, the small screen or the missing tracking needed for real AR, but for social reasons. Microsoft Research just showed a smaller pair of glasses, but Snap glasses are already kind of a thing of the past again.

Today, glasses are too big (or even remind us of Dark Helmet), have too little battery life and too narrow a field of view, and tracking is not yet world-scale without suffering under the mass of triangles in an overly large spatial map, etc. The existing glasses are a lot of fun for us developers, but are still not ready for the mass market. Meta is kind of “soon” starting to ship their dev kits, we still don't know how well gesture interaction will really work without tactile feedback, and not all legal questions have been answered yet. How about work safety with a frozen blue screen of death on your MR glasses blocking your vision?

We just don't have enough data to tell if smaller AR glasses would be accepted within the next few years. I feel uncomfortable if a phone is pointed at me, so what if the glasses' camera is constantly observing? Will the next Snap generation not care?

But even then, let's say this concept is widely accepted and no one cares about privacy anymore… what would the social experience be like? What if I see my virtual rabbit next to me, but my (real-world) friend can't see it? The idea that “glasses don't take us out of reality” seems like a fraud to me. We are even more distracted all the time! We create our own reality within our reality for us alone! … unless we share it. But how could we share our virtual assets if everyone is so keen on creating gated digital communities? Apple versus Meta versus Microsoft versus Snap versus Facebook versus some-small-open-source-crew… If we don't create a common parallel digital space to share, the whole AR glasses idea will die as an EPIC FAIL.

Never change a winning team?

All right, I got a bit carried away here. Back to the technology issues that need solving first. If glasses are still too far off for consumers, what do we do? Well, once again the smartphone will fill the gap as the augmented window. Stationary solutions like DeepFrame work at the office, in the train window or at theme parks, etc. They are a good medium for sharing digital information easily with others. It's a good social experience with a reduced presentation and invisible technology. I'm quite positive that we will see more of this style soon – after all, we are already getting the fully connected home, where even fridges have touchscreens. But on the go we still need something portable.

With the likes of Pokémon, object recognition, Google's AR translator and all the Snap- and Facebook-style augmented mirror apps, we can see AR slowly but surely finding its way into all consumers' hands – without them even knowing the term AR. When Tango (or similar concepts) kicks in on mobile devices, we will see the real world and virtual objects integrated even more seamlessly. The new video by Johnny Lee makes me believe once more that Tango needs to become a standard feature for new Android phones, asap!

Smartphone AR will be socially accepted, since everybody accepts people with smartphones in their hands all the time (not commenting on whether this is good or bad). You can also easily share your digital data by showing the phone to your friend. – The smartphone as an augmented window to the mixed reality world. – AR glasses, in contrast, would always hold secret information that would scare people away (like in this odd marketing video). This could have a bigger impact on the acceptance of AR glasses than previously thought, I'd say. Let me put it in a catchier way, hehe:

“The concept of secret information in AR glasses will scare people away until we find a social solution to share digital data seamlessly with others. We must start working on open standards today.”

– Tobias Kammann, augmented.org

Change the winning team… step by step

So, what will happen next? When will we see AR glasses on the streets big time? The tech needs to shrink, the operating systems need to get ready, etc. But as I said, the social component is the biggest hurdle. We will probably keep using our AR-enabled phones during the next 10 years, adding some stationary AR screens to our environment. But when will we finally switch to AR glasses? It took around 10 years until everyone had a mobile phone and until the distraction of texting remote friends in parallel was accepted in local social situations. The same will probably happen with glasses. Technology updates could come much quicker, but social changes will take their time. If we get it right and offer a social experience that allows sharing digital AR information without shutting out people with other (or no) devices, adoption could be sped up.

… but then again: if AI develops faster and faster and takes care of all the details of our lives… maybe we won't need puny peasant-like HUDs anymore while running through the streets. Maybe it will all run in the background by then and we can be more social without digital helpers or interruptions. :-)