Scaling the metaio City!

Hey everybody, insideAR is over and it’s time to revisit the conference. I waited an extra week to see some more videos pour onto the web to quote here, since I couldn’t take videos myself… and just yesterday metaio uploaded their conference presentation materials! So now it’s really the perfect time to blog about it! insideAR had two great days fully packed with augmented news from the Bavarian AR trophy company! I’ve uploaded a few shaky images to document things a bit and to kick off with a few impressions:

For now you can find some more pictures on my flickr page.

The conference was held again in the Eisbach studios, a bit outside of Munich city center, with enough space for demos and more seats in front of the stage. It sure grew again within a single year! At first glance, the focus seemed to stick with the mobile world: improved mobile content handling (creation and initialization of tracking) and better support for hardware acceleration. Some demos looked similar to last year’s at first glance, but sure improved in the details. The complete list of interesting talks can now be watched via metaio’s youtube channel! So lean back and browse through those. :-) … and yes, I’m also presenting in the Industry talk video. I’d be happy to get your comments. Enjoy! But let’s go through some things now:

Product News

metaio presented many new details and process improvements, and I didn’t have the time to see too many talks, but I think the overall message was clear: make life easier for developers during content creation and tracking setup, and move on with the Augmented City approach at a bigger scale, covering more space and getting more accurate. Worth a look is the talk by VP of Development Michael Kuhn (video above) with his news on the metaio SDK, Creator and junaio. The overall integration into the SDK got way better, and no extra tools are needed any more for the learning or startup phase of a tracking application. There are improvements for the developer and ease of use for the user in different areas (e.g. continuously scanning the video feed for feature maps and QR codes, like other readers already do for bar/QR codes). Although we might have seen SLAM integration or AREL before, it’s good to see improvements here.

Also new is a developer portal that just went online during the conference: dev.metaio.com.

Demos

This year, there were a lot of marketing demos and the usual suspects among the Industry applications. Many demos showed funny, crazy or helpful ideas using the mobile SDK. Different partners of metaio showed the apps they built on their SDK license. The video below sums up some nice impressions. The best thing, which you could see everywhere, was the on-the-fly feature map generation within the same application. Tracking and the interplay of different application bricks sure get easier to set up.

Besides these shows and some Industry applications that are well known to returning visitors (Faro arm, process for car engine maintenance, window into the VR world…), some hardware news was presented, including partner Vuzix showing their latest prototype of a slim HMD, hopefully to be released in Q3 2013. The unnamed device within the Smartline category had a pretty slim form factor: it comes with two QuarterHD screens, one HMD camera (mono recording only) and WiFi/Bluetooth, and it shows a 10-foot virtual screen. The glasses carry their own hardware running Android, which is supposed to work autonomously or in conjunction with an in-pocket smartphone. While this idea (I’ve seen the same approach at ISMAR11 in a way bulkier device) seems intriguing, we don’t really know if this is necessary or will last on the market. The good thing would be the possibility to have no extra device and less lag, since no extra WiFi data transmission is needed for tracking and visualization. But since the device does not come with 3G/4G mobile connectivity, this seems more like an approach for closed environments (like construction sites or maintenance, overlaying offline helper information). And again, it’s not possible to adjust the focal distance of the virtual plane. I’m sure this will give us quite a few headaches for AR projection later on. Well, we will see one day! Can’t wait!

Research

Research-wise you could notice their focus on scale again. There were trials with a complete magazine of 100 augmented pages, SLAM calculations running on aerial footage to nicely reconstruct the camera trajectory, and other demos to up-scale things.

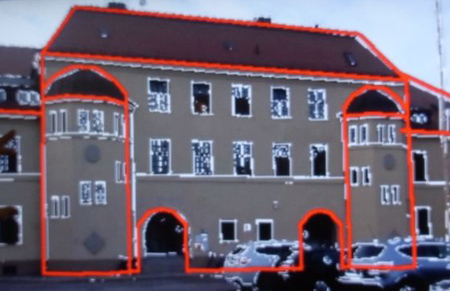

Intriguing was their new concept for helping out the initialization of the first tracking pose with an edge snapping algorithm (see above). To make up for a bad GPS signal, they align a coarse 3D outline model of a known building to the video feed and try to automatically snap it to the matching edges. Afterwards they can trigger the usual feature-based SLAM to continuously track with more information. Since a fully textured 3D reference would break down under changing light conditions, this seems to be a neat trade-off to automate initialization easily. We will see where this leads; it is still only a prototype. Great to see, though, that they invest time into this field. If we had more 3D reference models, the AR City would have an easier kick-off. Manual positioning and errors of a few meters are not a good base to actually blend the real and the virtual…
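
To make the snapping idea a bit more tangible, here is a tiny toy sketch in Python. To be clear: this is not metaio’s algorithm (I don’t know their implementation), just my own simplified assumption of how such a step could look. It pretends the coarse building outline is already projected into the image as a 2D polygon, assumes an edge detector has already delivered a list of edge pixels, and only brute-forces a small 2D shift that minimizes the mean distance between outline and edges. All names (`sample_outline`, `mean_edge_distance`, `snap_outline`) are made up for this example.

```python
import math


def sample_outline(corners, step=1.0):
    """Sample points along a closed polygon given as a list of (x, y) corners."""
    points = []
    n = len(corners)
    for i in range(n):
        (x0, y0), (x1, y1) = corners[i], corners[(i + 1) % n]
        length = math.hypot(x1 - x0, y1 - y0)
        count = max(1, int(length / step))
        for k in range(count):
            t = k / count
            points.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return points


def mean_edge_distance(points, edge_pixels):
    """Average distance from each outline point to its nearest edge pixel."""
    total = 0.0
    for px, py in points:
        total += min(math.hypot(px - ex, py - ey) for ex, ey in edge_pixels)
    return total / len(points)


def snap_outline(corners, edge_pixels, search_radius=5):
    """Brute-force the integer pixel shift that best aligns outline and edges.

    Hypothetical toy: a real system would also have to solve for rotation,
    scale and the full 3D pose, not just a 2D translation.
    """
    outline = sample_outline(corners)
    best_cost, best_offset = float("inf"), (0, 0)
    for dx in range(-search_radius, search_radius + 1):
        for dy in range(-search_radius, search_radius + 1):
            shifted = [(x + dx, y + dy) for x, y in outline]
            cost = mean_edge_distance(shifted, edge_pixels)
            if cost < best_cost:
                best_cost, best_offset = cost, (dx, dy)
    return best_offset, best_cost
```

Calling `snap_outline(guess_corners, edge_pixels)` with a rough (GPS-like) guess of the outline returns the shift that pulls it onto the detected edges, plus the remaining mean distance. Once that residual is small enough, you would hand the corrected pose over to the regular tracker.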

metaio sure made a good show and presented some great progress within their software suite, especially in making life way easier for content developers. While they are still fighting for really stable, fully automatic outdoor tracking at 1:1 scale for the AR City, we can already see that they are on the right track to reach it and that they have their vision here! Can’t wait for 2013!

Well, so much for my wrap-up here. Enjoy the videos and see you soon with news on ARE2012 and ISMAR2012! If you have further comments or images, please don’t hesitate to share them with us in the social plazas!

That’s all for today! Have fun! :-)